-

Visualizing Elasticsearch Function Scores

One of the most common questions we get from our WordPress VIP clients, many of whom are large media companies that publish constantly, is how they can bias their search results towards more recent content when scoring and sorting them. This type of problem is…

-

Balancing Kafka on JBOD

At Automattic we run a diverse array of systems and, as with many companies, Kafka is the glue that ties them together, letting us shuffle data back and forth. Our experience with Kafka has thus far been fantastic: it’s stable, provides excellent throughput, and the simple API makes it trivial to hook any of our systems up to it.…

-

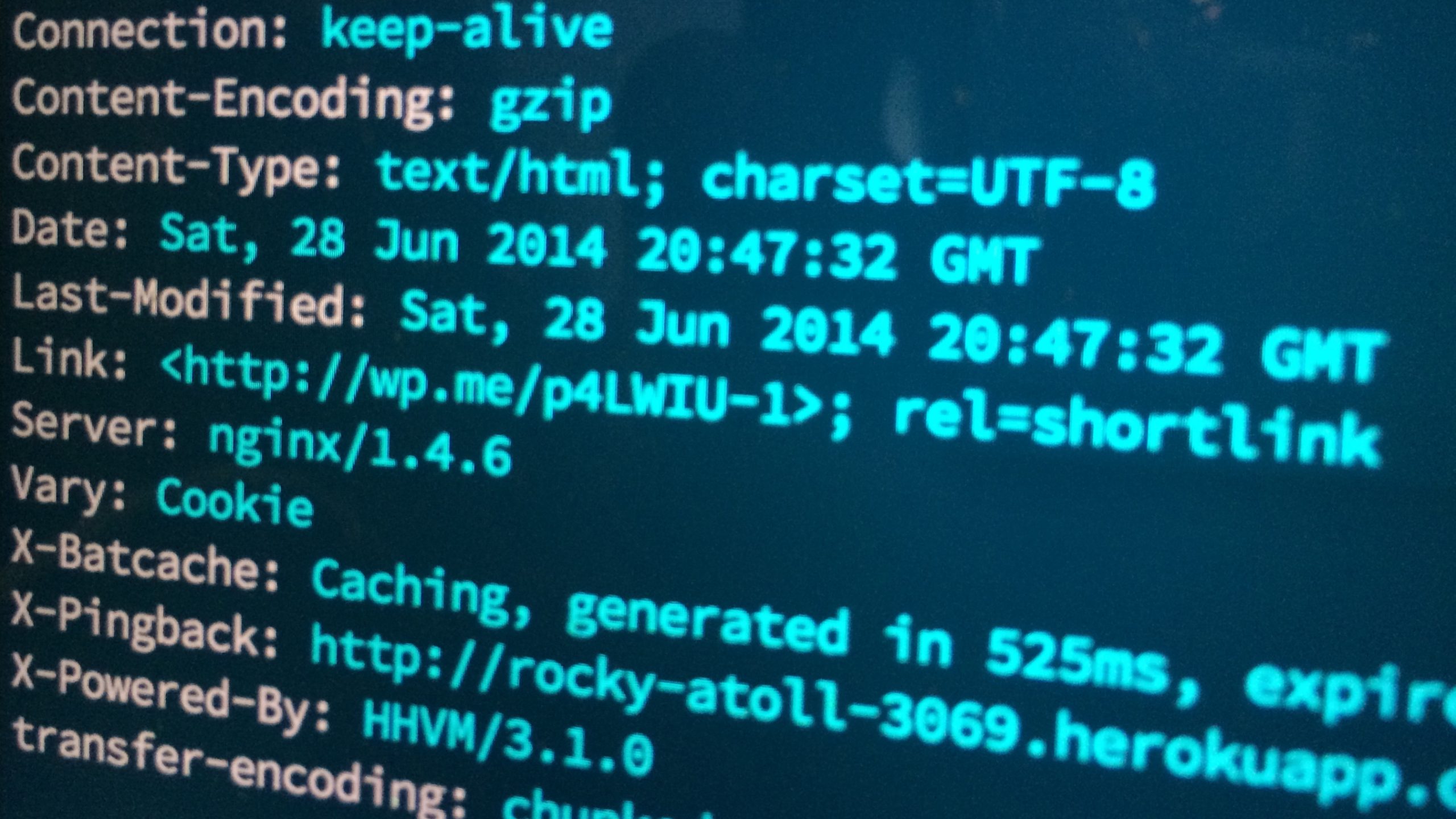

WordPress Performance with HHVM

With Heroku-WP I hoped to lower the bar in getting WordPress up and running on a more modern tech stack. But what are the performance implications of running WordPress on such a modern set of technologies? Surely it’s faster, but by how much, and are the performance gains worth the trouble? To answer that I’ve…

-

Introducing Whatson, an Elasticsearch Consulting Detective

Over the past few months I’ve been working with the Elasticsearch cluster at Automattic. While we monitor longitudinal statistics on the cluster through Munin, when something is amiss there’s currently not a good place to take a look and drill down to see what the issue is. I use various Elasticsearch plugins; however, they all…

-

Static Asset Caching Using Apache on Heroku

There have been many articles written over the years about how to properly implement static asset caching, and the best practices boil down to three things: make sure the server is sending RFC-compliant caching headers, send long expires headers for static assets, and use version numbers in asset paths so that we can precisely control expiration.
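
As a rough illustration of those three practices, here is a minimal Python sketch rather than the Apache configuration the post itself covers. The function names, the `v42` version label, and the one-year lifetime are all assumptions chosen for the example.

```python
from datetime import datetime, timedelta, timezone
from email.utils import format_datetime

# Assumed lifetime for static assets: one year, expressed in seconds.
ONE_YEAR = 365 * 24 * 60 * 60


def versioned_path(path: str, version: str) -> str:
    """Insert a version segment before the file name.

    Hypothetical scheme: /css/site.css becomes /css/v42/site.css, so bumping
    the version yields a new URL and sidesteps any stale cached copy.
    """
    directory, _, filename = path.rpartition("/")
    return f"{directory}/{version}/{filename}"


def caching_headers(now: datetime, max_age: int = ONE_YEAR) -> dict:
    """Build long-lived caching headers for a static asset.

    `now` must be timezone-aware and in UTC so the Expires value can be
    rendered as an HTTP date ending in "GMT".
    """
    expires = now + timedelta(seconds=max_age)
    return {
        "Cache-Control": f"public, max-age={max_age}",
        "Expires": format_datetime(expires, usegmt=True),
    }


if __name__ == "__main__":
    print(versioned_path("/css/site.css", "v42"))
    print(caching_headers(datetime.now(timezone.utc)))
```

Because the version lives in the path, a long expiry is safe: releasing a changed asset means changing its URL, not waiting for caches to expire.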